“In April 2016, at the height of the deadliest drug epidemic in U.S. history, Congress effectively stripped the Drug Enforcement Administration of its most potent weapon against large drug companies suspected of spilling prescription narcotics onto the nation’s streets,” says the article by Scott Higham and Lenny Bernstein. “A handful of members of Congress, allied with the nation’s major drug distributors, prevailed upon the DEA and the Justice Department to agree to a more industry-friendly law, undermining efforts to stanch the flow of pain pills…”

The WaPo story goes on:

The law was the crowning achievement of a multifaceted campaign by the drug industry to weaken aggressive DEA enforcement efforts against drug distribution companies that were supplying corrupt doctors and pharmacists who peddled narcotics to the black market. The industry worked behind the scenes with lobbyists and key members of Congress, pouring more than a million dollars into their election campaigns.

Of course, knowing that the Washington Post is capable of fabricating fake sources out of thin air — as in the paper’s fake news “Russia conspiracy” hoax narrative — we have to take all this with a grain of salt. Also keep in mind that, with this story, the Washington Post is asserting the existence of a massive drug cartel conspiracy involving Congress, drug companies and the DEA.

Ten years ago, I was ridiculed for asserting much the same thing, yet today this news blankets the pages of the Washington Post. Let’s look more closely at the structure of this drug cartel conspiracy the Post says is operating in America today, because as it turns out, WaPo actually got this story right.

Republican lawmakers are front men for Big Pharma’s illicit drug cartel

The Big Pharma opioid drug cartel protection racket was put in place by Rep. Tom Marino, a Republican, says the Post. That same story also names Republican Senator Orrin Hatch as complicit in the negotiation of the final language of the bill. Knowing the extreme dishonesty of the Washington Post’s fake journalism, there were probably all sorts of Democrats involved in this bill as well, but they didn’t bother to include them in the story, since everything the Post publishes is engineered to demonize Republicans rather than report all the relevant news facts.

“The new law makes it virtually impossible for the DEA to freeze suspicious narcotic shipments from the companies,” says the Post. “Political action committees representing the industry contributed at least $1.5 million to the 23 lawmakers who sponsored or co-sponsored four versions of the bill,” the article says, once again refusing to name any Democrats who received money from the drug industry. However, the Post does admit that President Obama signed it into law, adding that “top officials at the White House and the Justice Department have declined to discuss how the bill came to pass.”

Loretta Lynch, former attorney general and now widely known to be a treasonous cover-up artist who secretly met with Bill Clinton before shutting down any prosecution of Hillary Clinton for obstruction of justice (she deleted 33,000+ emails, remember?), “declined a recent interview request,” reports the Post.

The Washington Post runs head first into a massive government cover-up

“The DEA and Justice Department have denied or delayed more than a dozen requests filed by The Post and ‘60 Minutes’ under the Freedom of Information Act for public records that might shed additional light on the matter,” says the Post.

When the Washington Post attempted to interview Rep. Tom Marino, his staff called the Capitol Police as a warning to reporters. Blocked at every turn, the Post says it is now suing the DOJ for documents that should have been released under the Freedom of Information Act (FOIA).

In other words, the Washington Post, a pro-big-government paper that almost universally believes government can do no wrong, is suddenly finding out just how corrupt, incompetent and dangerous a government racket can truly become. When Big Pharma’s billions of dollars get funneled into the pockets of greedy Washington lawmakers, the health of the entire nation is put at risk, and powerful corporations are granted a kind of “legal immunity” while dealing dangerous drugs.

As the Post reports:

Today, Rannazzisi is a consultant for a team of lawyers suing the opioid industry. Separately, 41 state attorneys general have banded together to investigate the industry. Hundreds of counties, cities and towns also are suing. “This is an industry that’s out of control. If they don’t follow the law in drug supply, and diversion occurs, people die. That’s just it, people die,” he said.

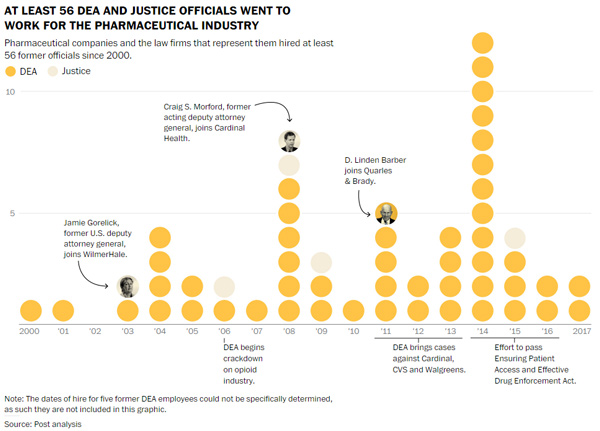

A key reason for the cover-up, of course, is that government officials routinely take jobs with the very same drug companies they previously regulated. Via the Post:

In 2011, Linden Barber left the DEA to join the Washington, D.C., office of the law firm Quarles & Brady. He started a practice representing drug companies. “If you have a DEA compliance issue or you’re facing a government investigation,” he said in a promotional video for the firm, “I’d be happy to hear from you.”

Barber’s move turned out to be a key moment in the struggle between drug companies and the government, but it was far from the only one. Dozens of top officials from the DEA and Justice Department have stepped through Washington’s revolving door to work for drug companies.

The DEA, in other words, quickly became nothing more than an extension of the Big Pharma drug cartel. This is known as a “regulatory capture” structure, where regulatory agencies such as the FDA, EPA, USDA and DEA very quickly become “captured” by the very industry they once claimed to regulate. As Natural News readers know very well, nearly every federal regulatory body has already been overrun by powerful industry interests.

The EPA, for example, is one of the top polluters in America, routinely “legalizing” toxic pesticides and herbicides that poison consumers and the environment. The USDA is a front group for the GMO biotech industry, and the FDA is nothing but a fake science cover for the corrupt, criminally-run pharmaceutical industry. The CDC, similarly, is a fake science front for the vaccine industry.

As far as the DEA goes, the independent media has been publishing stories for years that document how the DEA is running drugs in America. It’s the DEA that grants certain drug cartels immunity from prosecution, of course, while taking down competing organizations in an effort to build near-monopoly drug cartels. Asset seizure laws also allowed the DEA to profit from taking down drug-running cells that were previously allowed to grow and prosper in order to build up assets that eventually became worth seizing. In effect, the DEA has been running a “drug cartel farming operation” in America.

Now, thanks to the Washington Post’s investigation, we learn that the DEA directly negotiated with the drug industry to grant opioid manufacturers selective immunity from criminal seizure and prosecution. Is anyone really surprised? This has been the DEA’s business model for decades.

A graphic published by the Washington Post reveals that at least 56 DEA and Justice Dept. officials left their government jobs to work for the pharmaceutical industry.

In other words, America has become a narco-pharma state, and the infiltration of government by powerful, drug-dealing corporations is now complete. The drug pushers are running Congress and the regulators. They’re also running nearly the entire mainstream media, which is why independent media organizations like Natural News were a full decade ahead of the Washington Post in sounding the alarm on this sort of collusion. (What the mainstream media is just now waking up to, Natural News already reported in 2007 or earlier.)

Congress keeps medical marijuana criminalized to protect the profits of the corrupt opioid drug industry

Once you fully grasp the depth of collusion between the opioid drug cartels, Congress and the DEA, it’s not difficult to figure out why they’ve all conspired to keep medical marijuana, CBD and hemp extracts illegal at the federal level. The protection racket requires anything that competes with prescription opioids to be criminalized.

It’s all about limiting consumers’ options and funneling them into a life of personal addiction and Big Pharma profits. As long as the money keeps rolling in, federal workers will keep taking jobs with the drug industry, exploiting their contacts and influence in Washington to keep their toxic, deadly drugs flowing into the hands of vulnerable Americans. At the same time, every effort will be undertaken to keep medical marijuana illegal, thereby denying citizens and veterans an affordable, safe and highly effective option for pain control.

It has taken until 2017 for the mainstream media to finally admit all this is happening. The deep state collusion and conspiracy are now finally being covered by the Washington Post and 60 Minutes. Just remember that Natural News warned America about all this a decade ago and was ridiculed for believing in “conspiracy theories.”

Suddenly, it seems, the narco-pharma-government industrial complex is no longer a “theory.” It’s a business model for corporate America.

Read more: https://www.naturalnews.com/2017-10-16-washington-post-u-s-congress-engineered-dea-racket-to-protect-big-pharmas-opioid-drug-giants.html